Agents

- Presentation - Automating the Future: Build Powerful AI Agents - Google Slides

- An LLM Agent is a software entity capable of reasoning and autonomously executing tasks.

- GitHub - viktoriasemaan/multi-agent: Examples of AI Multi-Agent Solutions ⭐ 204

- Building LLM Agents with Tool Use - YouTube

- AI Agents Are Changing AWS Cost Prediction - YouTube

Concepts

- Agent: An autonomous entity that perceives, reasons, and acts in an environment to achieve goals.

- Environment: The surrounding context or sandbox in which the agent operates and interacts.

- Perception: The process of interpreting sensory or environmental data to build situational awareness.

- State: The agent’s current internal condition or representation of the world.

- Memory: Storage of recent or historical information for continuity and learning.

- Large Language Models: Foundation models powering language understanding and generation.

- Reflex Agent: A simple type of agent that makes decisions based on predefined “condition-action” rules.

- Knowledge Base: Structured or unstructured data repository used by agents to inform decisions.

- CoT (Chain of Thought): A reasoning method where agents articulate intermediate steps for complex tasks.

- ReAct: A framework that interleaves step-by-step reasoning with actions taken in the environment.

- Tools: APIs or external systems that agents use to augment their capabilities.

- Action: Any task or behavior executed by the agent as a result of its reasoning.

- Planning: Devising a sequence of actions to reach a specific goal.

- Orchestration: Coordinating multiple steps, tools, or agents to fulfill a task pipeline.

- Handoffs: The transfer of responsibilities or tasks between different agents.

- Multi-Agent System: A framework where multiple agents operate and collaborate in the same environment.

- Swarm: Emergent intelligent behavior from many agents following local rules without central control.

- Agent Debate: A mechanism where agents argue opposing views to refine or improve outcomes.

- Evaluation: Measuring the effectiveness or success of an agent’s actions and outcomes.

- Learning Loop: The cycle where agents improve performance by continuously learning from feedback or outcomes.
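As a minimal sketch of how perception and action fit together, here is the simplest agent from the list above: a reflex agent. It reads a percept from the environment, matches it against ordered condition-action rules, and acts, with no state, memory, or planning. The thermostat environment, the `temp_c` percept key, and all class names are hypothetical illustrations.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """A condition-action rule: if condition(percept) holds, take action."""
    condition: Callable[[dict], bool]
    action: str


class ReflexAgent:
    """Maps the current percept straight to an action through ordered rules.

    No state, memory, or planning: each decision depends only on the latest percept.
    """

    def __init__(self, rules: list[Rule], default_action: str = "noop"):
        self.rules = rules
        self.default_action = default_action

    def act(self, percept: dict) -> str:
        # First matching rule wins; otherwise fall back to the default action.
        for rule in self.rules:
            if rule.condition(percept):
                return rule.action
        return self.default_action


# Hypothetical thermostat environment: each percept is a temperature reading.
thermostat = ReflexAgent([
    Rule(lambda p: p["temp_c"] < 18, "heat_on"),
    Rule(lambda p: p["temp_c"] > 24, "cool_on"),
])

for reading in (15, 21, 27):
    print(reading, thermostat.act({"temp_c": reading}))
```

This prints `heat_on`, `noop`, and `cool_on` for the three readings. Adding state, memory, tools, and an LLM for reasoning is what moves an agent beyond this baseline.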

Building

How to Build an AI Agent (7-Step Blueprint)

- System Prompt - Define the agent’s goals, role, and instructions. A thoughtful prompt shapes behavior from the ground up.

- LLM Selection - Pick your reasoning engine (e.g., GPT-4, Claude, Gemini) and configure its generation parameters, such as temperature and max tokens.

- Tools - Give your agent abilities: from calling APIs to using other agents as tools. This is where agents move from “talking” to “doing”.

- Memory - Short-term and long-term memory (episodic, vector DBs, file stores) allow agents to remember, learn, and personalize over time.

- Orchestration - The control layer that structures the agent’s behavior: workflows, triggers, agent-to-agent (A2A) protocols, and message queues.

- User Interface - A good interface (chat, voice, web) brings your agent to life. It matters for trust and usability, not just function.

- AI Evaluations - Agents need feedback loops. Measure performance, learn from failure, and improve continuously.
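The first four steps of the blueprint can be sketched as plain configuration plus a bounded short-term memory. This is a minimal, provider-agnostic sketch: `"example-model"`, the message-dict shape, and the `lookup_invoice` tool are hypothetical, and no real LLM API is called.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LLMConfig:
    model: str = "example-model"  # hypothetical name; use your provider's model identifier
    temperature: float = 0.2      # lower values make sampling more deterministic
    max_tokens: int = 1024        # upper bound on tokens generated per call


@dataclass
class AgentSpec:
    system_prompt: str                                   # step 1: goals, role, instructions
    llm: LLMConfig                                       # step 2: reasoning engine + parameters
    tools: dict[str, Callable[..., str]] = field(default_factory=dict)  # step 3: abilities
    memory_window: int = 10                              # step 4: short-term memory size


class Agent:
    def __init__(self, spec: AgentSpec):
        self.spec = spec
        # Short-term memory as a bounded buffer: the oldest turns drop off automatically.
        self.history: deque = deque(maxlen=spec.memory_window)

    def build_messages(self, user_input: str) -> list[dict]:
        """Assemble one model request: system prompt, recent history, then the new turn."""
        self.history.append({"role": "user", "content": user_input})
        return [{"role": "system", "content": self.spec.system_prompt}, *self.history]


agent = Agent(AgentSpec(
    system_prompt="You are a billing assistant. Answer only billing questions.",
    llm=LLMConfig(temperature=0.0),
    tools={"lookup_invoice": lambda invoice_id: f"Invoice {invoice_id}: paid"},  # hypothetical
    memory_window=2,
))
for turn in ("hi", "where is my invoice?", "it is INV-7"):
    messages = agent.build_messages(turn)
print(len(messages))  # 3: the system prompt plus the two most recent turns
```

Keeping the system prompt outside the memory buffer means it is never evicted, however long the conversation runs. A fuller agent would also store the model's replies and back long-term memory with a vector DB or file store.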

Agentic AI Architectures

Core agentic patterns such as ReAct, Reflection, Planning, and Tool Use

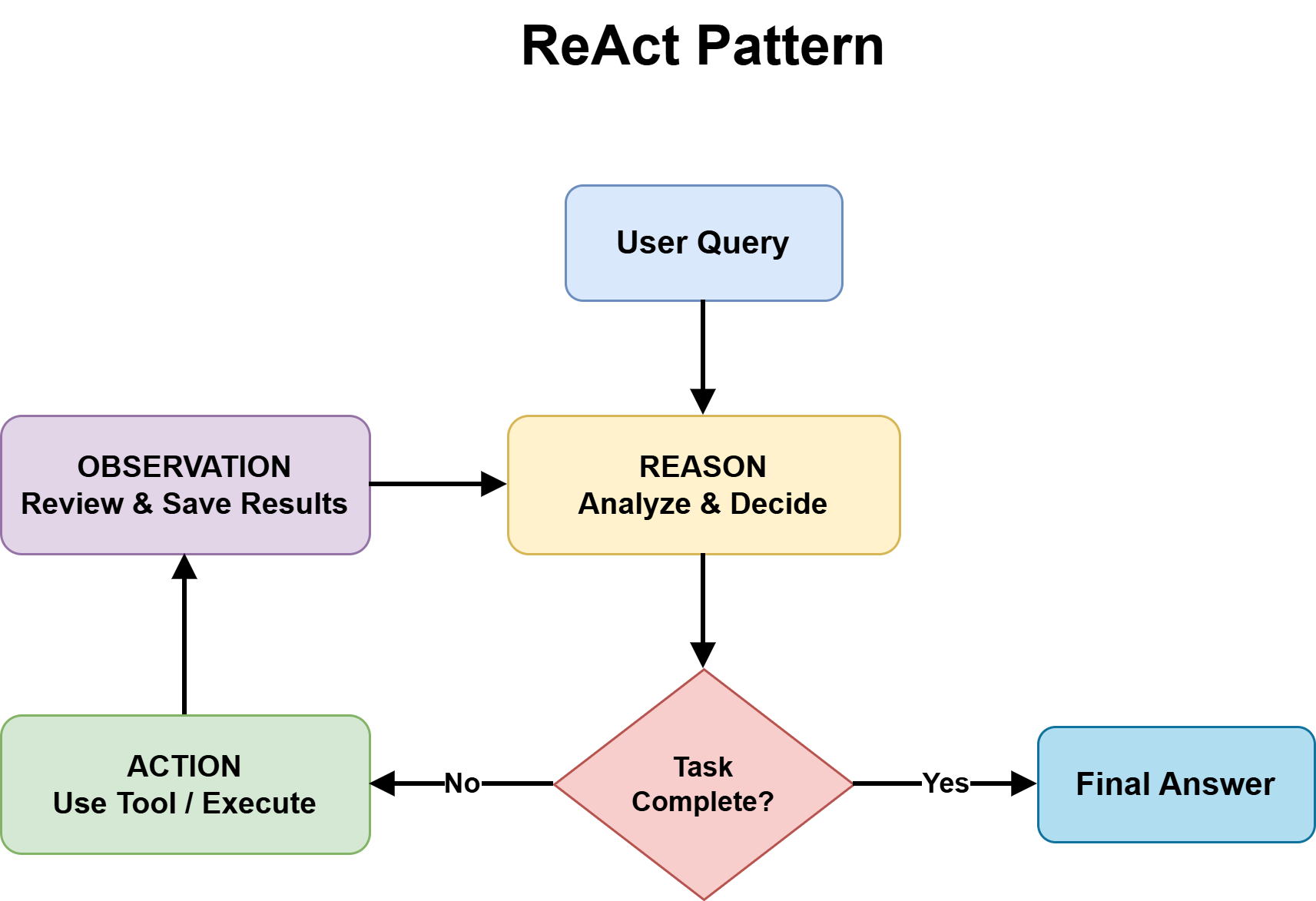

ReAct Pattern

ReAct (Reasoning and Acting) is the most foundational agentic design pattern and a sensible default for complex, unpredictable tasks. It combines chain-of-thought reasoning with external tool use in a continuous feedback loop.

The structure alternates between three phases:

- Thought: the agent reasons about what to do next

- Action: the agent invokes a tool, calls an API, or runs code

- Observation: the agent processes the result and updates its plan

This repeats until the task is complete or a stopping condition is reached.

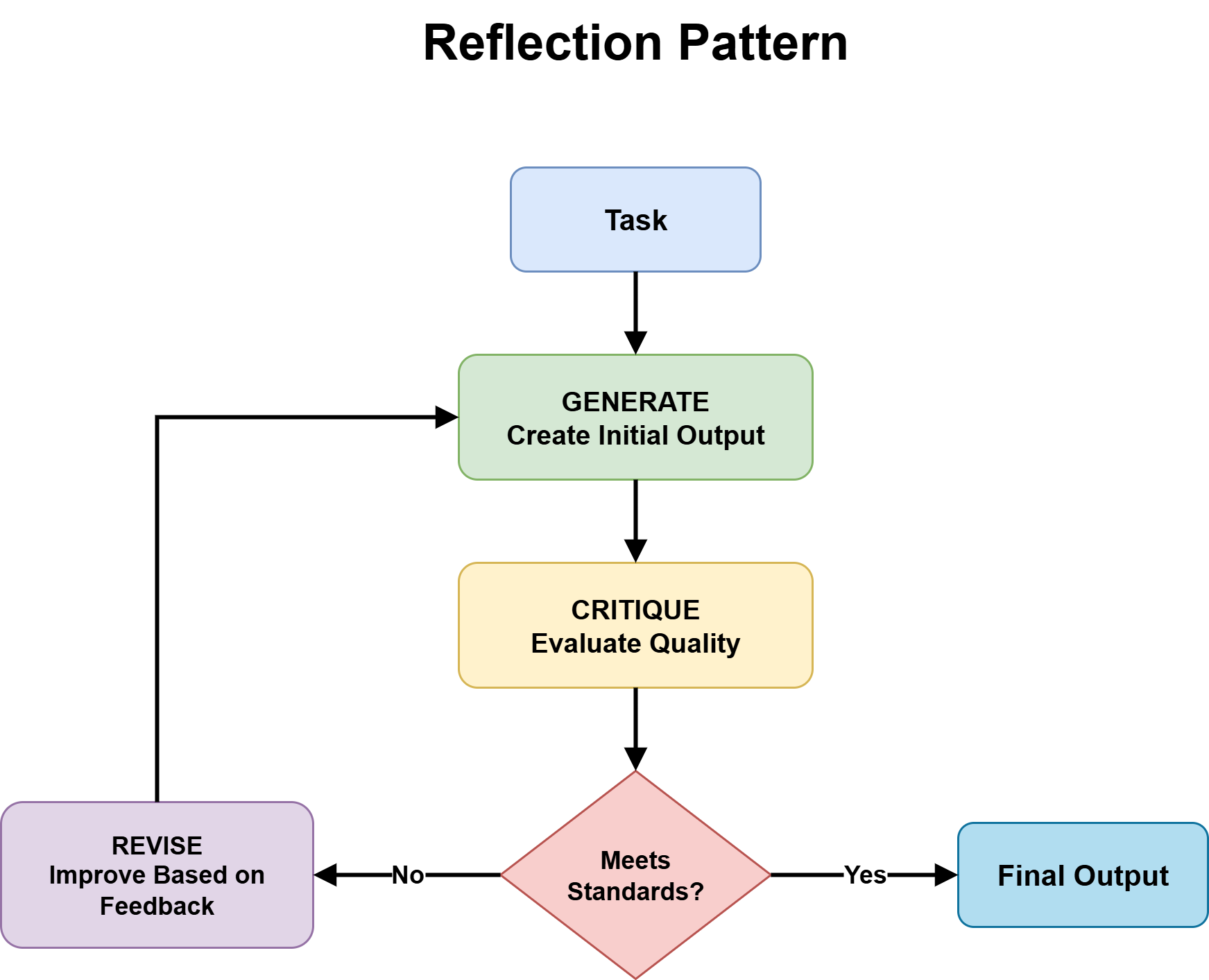
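The Thought, Action, Observation loop can be sketched in a few lines. To keep it runnable offline, `scripted_llm` is a hypothetical stand-in for a model call, and the `Action: tool[arg]` text format, the inventory data, and both tools are assumptions for illustration.

```python
import re
from typing import Callable

INVENTORY = {"widgets_in_stock": "42"}  # hypothetical data source

# Tools the agent can invoke; real ones would wrap APIs, search, or code execution.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup": lambda key: INVENTORY.get(key, "unknown"),
    "double": lambda x: str(int(x) * 2),
}

ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def scripted_llm(transcript: str) -> str:
    """Hypothetical stand-in for a model call: emits the next Thought and Action."""
    if "Observation: 42" not in transcript:
        return "Thought: I need the current stock level.\nAction: lookup[widgets_in_stock]"
    if "Observation: 84" not in transcript:
        return "Thought: The reorder quantity is twice the stock.\nAction: double[42]"
    return "Thought: I have what I need.\nFinal Answer: 84"


def react(question: str, llm: Callable[[str], str],
          tools: dict[str, Callable[[str], str]], max_steps: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)                      # Thought, then Action or Final Answer
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            observation = "could not parse an action"
        else:
            name, arg = match.groups()
            tool = tools.get(name)
            observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"  # feeds the next Thought
    return "stopped: step limit reached"             # stopping condition


print(react("How many widgets should we reorder (twice current stock)?", scripted_llm, TOOLS))
```

The growing transcript is what lets each new Thought condition on every earlier Observation, and `max_steps` is the stopping condition that prevents an agent from looping forever when it never reaches an answer.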

Next Step: Adding Reflection to Improve Output Quality

Reflection gives an agent the ability to evaluate and revise its own outputs before they reach the user. The structure is a generation-critique-refinement cycle: the agent produces an initial output, assesses it against defined quality criteria, and uses that assessment as the basis for revision. The cycle runs for a set number of iterations or until the output meets a defined threshold.

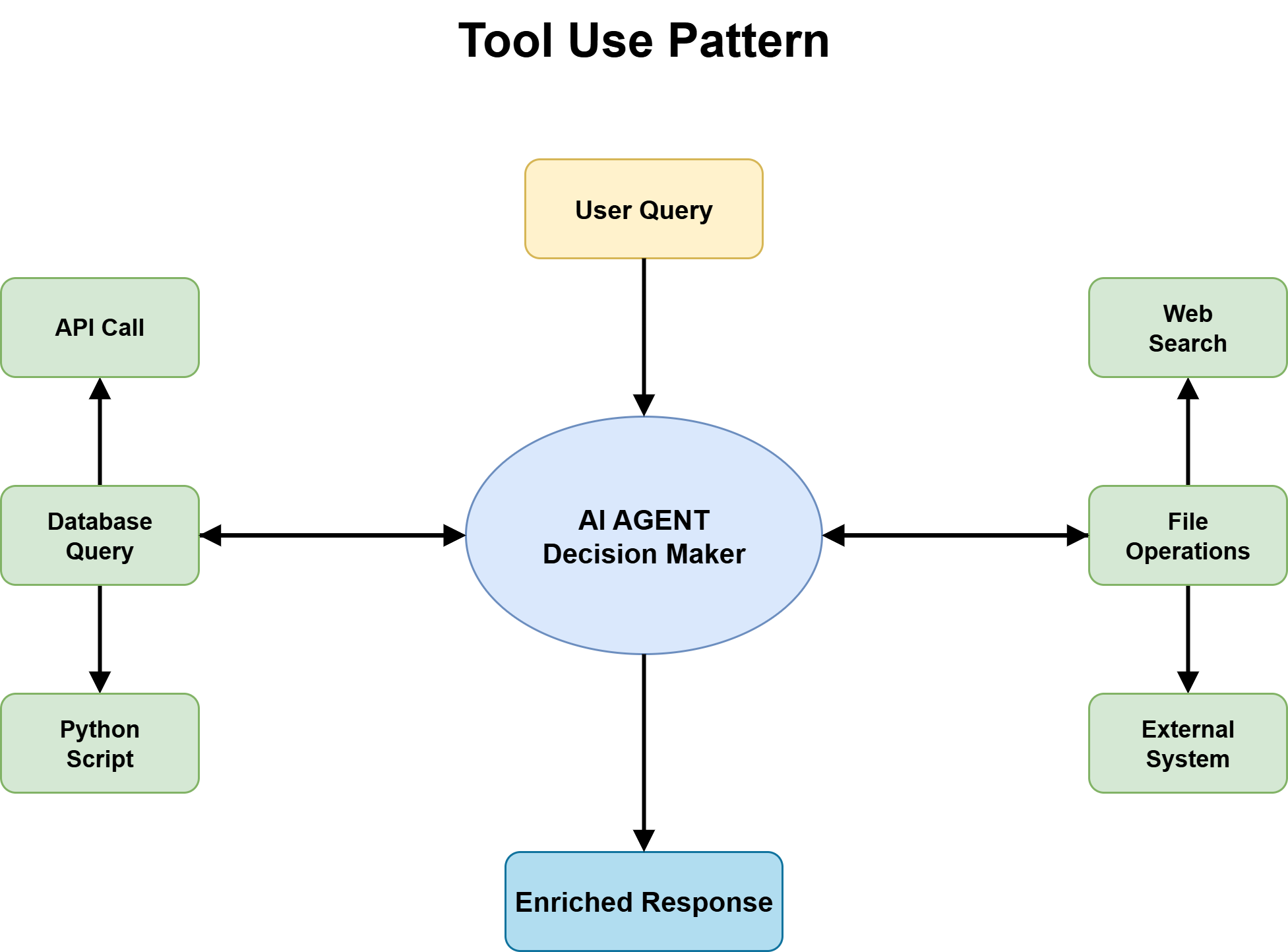
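A minimal sketch of the generation-critique-refinement cycle, with both stopping rules (an iteration cap and a quality threshold). The generator, critic, and reviser are deterministic stand-ins for LLM calls, and the `REQUIRED_TERMS` quality criteria are hypothetical.

```python
from dataclasses import dataclass

REQUIRED_TERMS = ["cost", "latency", "accuracy"]  # hypothetical quality criteria


@dataclass
class Critique:
    score: float        # fraction of criteria met, 0.0 to 1.0
    issues: list[str]   # concrete problems for the next revision


def generate(task: str) -> str:
    """Stand-in for the initial LLM draft."""
    return f"Summary of {task}: covers cost."


def critique(draft: str) -> Critique:
    """Stand-in for an LLM or rule-based critic that checks the draft against criteria."""
    missing = [t for t in REQUIRED_TERMS if t not in draft]
    return Critique(1 - len(missing) / len(REQUIRED_TERMS), [f"mention {t}" for t in missing])


def refine(draft: str, feedback: Critique) -> str:
    """Stand-in for a revision call prompted with the critique; fixes one issue per pass."""
    term = feedback.issues[0].removeprefix("mention ")
    return f"{draft} Also covers {term}."


def reflect(task: str, max_iters: int = 3, threshold: float = 1.0) -> str:
    draft = generate(task)
    for _ in range(max_iters):              # bounded number of critique-refine rounds
        feedback = critique(draft)
        if feedback.score >= threshold:     # good enough: stop early
            break
        draft = refine(draft, feedback)
    return draft


print(reflect("model comparison"))
```

The critique returns specific issues rather than only a score, because a revision step can act on "mention latency" but not on "0.67". `str.removeprefix` needs Python 3.9 or later.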

Next Step: Making Tool Use a First-Class Architectural Decision

Tool use is the pattern that turns an agent from a knowledge system into an action system. Without it, an agent has no current information, no access to external systems, and no ability to trigger actions in the real world. With it, an agent can call APIs, query databases, execute code, retrieve documents, and interact with software platforms. For almost every production agent handling real-world tasks, tool use is the foundation everything else builds upon.
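Treating tool use as an architectural decision mostly means having an explicit registry: each tool carries a description the model can read, and every model-emitted call is validated before it runs. This is a minimal sketch. The `{"name": ..., "arguments": {...}}` call format is an assumption (real providers each define their own), and `get_order_status` is a hypothetical tool.

```python
import json
from typing import Any, Callable

TOOL_REGISTRY: dict[str, dict[str, Any]] = {}


def tool(name: str, description: str, params: dict[str, type]):
    """Decorator that registers a function plus the metadata the model sees."""
    def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOL_REGISTRY[name] = {"fn": fn, "description": description, "params": params}
        return fn
    return wrap


@tool("get_order_status", "Look up the shipping status of an order.", {"order_id": str})
def get_order_status(order_id: str) -> str:
    return {"A100": "shipped"}.get(order_id, "not found")  # hypothetical backend


def describe_tools() -> list[dict]:
    """Tool descriptions to include in the prompt so the model knows what it can call."""
    return [{"name": name, "description": t["description"],
             "parameters": {p: ty.__name__ for p, ty in t["params"].items()}}
            for name, t in TOOL_REGISTRY.items()]


def dispatch(tool_call_json: str) -> str:
    """Validate and execute a model-emitted call like {"name": ..., "arguments": {...}}.

    Errors are returned as strings so the caller can feed them back to the model.
    """
    call = json.loads(tool_call_json)
    entry = TOOL_REGISTRY.get(call.get("name"))
    if entry is None:
        return f"error: unknown tool {call.get('name')!r}"
    args = call.get("arguments", {})
    for param, expected in entry["params"].items():
        if not isinstance(args.get(param), expected):
            return f"error: {param} must be {expected.__name__}"
    # Pass only declared parameters, ignoring anything extra the model invented.
    return str(entry["fn"](**{p: args[p] for p in entry["params"]}))


print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A100"}}'))
```

Returning validation errors as text instead of raising lets the agent see its mistake as an observation and retry, which keeps a single malformed call from crashing the loop.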

Planning

Planning is the pattern for tasks where complexity or coordination requirements are high enough that ad-hoc reasoning through a ReAct loop is not sufficient. Where ReAct improvises step by step, planning breaks the goal into ordered subtasks with explicit dependencies before execution begins.

There are two broad implementations:

- Plan-and-Execute: an LLM generates a complete task plan, then a separate execution layer works through the steps.

- Adaptive Planning: the agent generates a partial plan, executes it, and re-evaluates before generating the next steps.

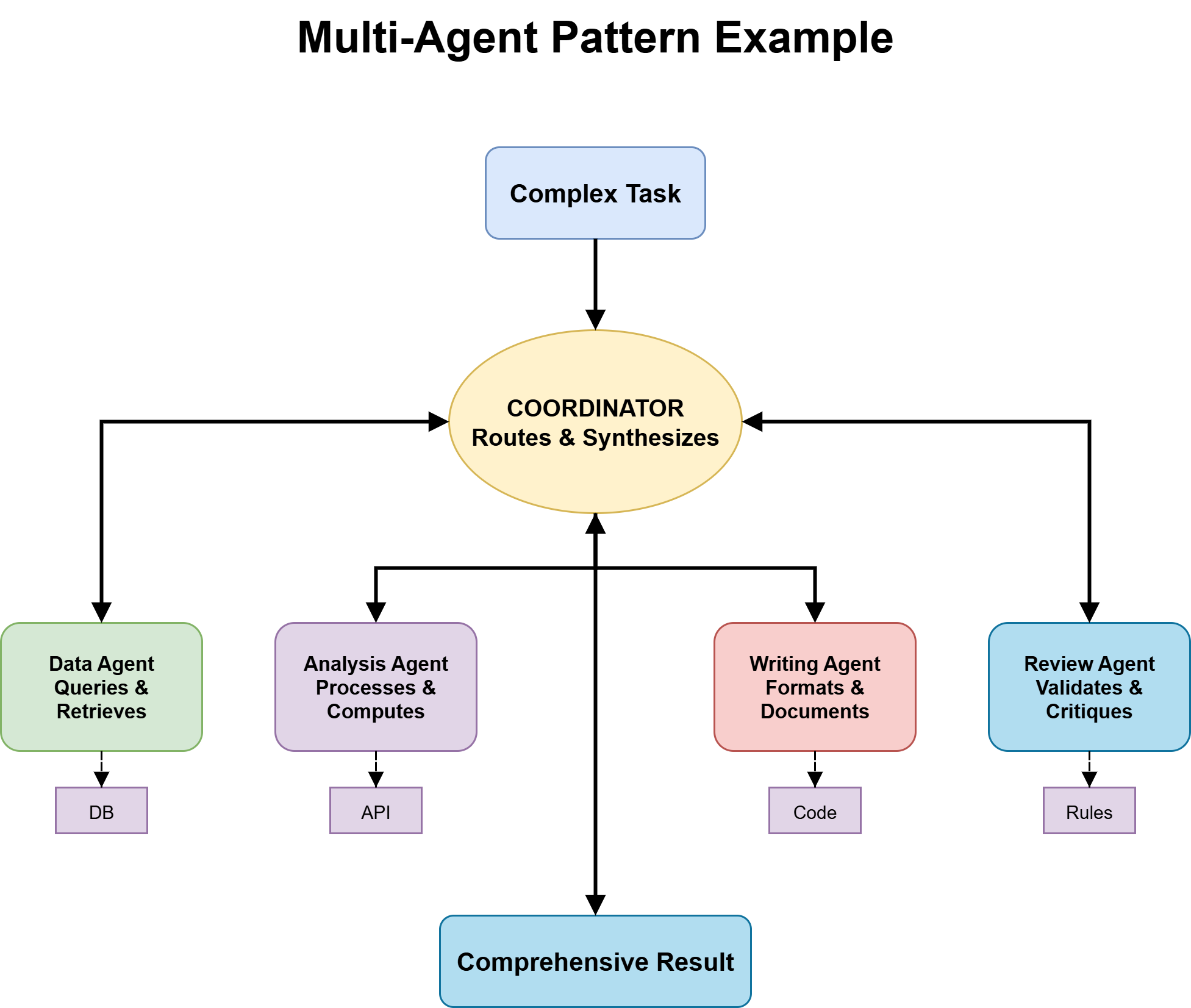
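Plan-and-Execute can be sketched as a dependency graph that is fully written down before execution, then run in an order where every subtask follows its prerequisites. This sketch uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+). The plan is hard-coded where an LLM planner would normally produce it, and the subtask names and `execute_step` are hypothetical.

```python
from graphlib import TopologicalSorter

# Plan-and-execute: a planner (an LLM in practice; hard-coded here) emits every subtask
# with its dependencies before anything runs. Each key depends on the subtasks in its set.
PLAN: dict[str, set[str]] = {
    "collect_requirements": set(),
    "search_vendors": {"collect_requirements"},
    "compare_prices": {"search_vendors"},
    "check_compliance": {"search_vendors"},
    "write_recommendation": {"compare_prices", "check_compliance"},
}


def execute_step(step: str, deps: set[str], results: dict[str, str]) -> str:
    """Hypothetical executor: a real one would call tools or a worker agent."""
    missing = [d for d in deps if d not in results]
    assert not missing, f"dependencies not yet run: {missing}"
    return f"{step} <- {', '.join(sorted(deps)) or 'nothing'}"


def plan_and_execute(plan: dict[str, set[str]]) -> dict[str, str]:
    results: dict[str, str] = {}
    # static_order() yields each subtask only after all of its dependencies.
    for step in TopologicalSorter(plan).static_order():
        results[step] = execute_step(step, plan[step], results)
    return results


results = plan_and_execute(PLAN)
print(list(results))  # execution order
```

For Adaptive Planning, the same structure would be rebuilt after each executed batch: run the ready steps, show the results to the planner, and let it add or revise the remaining subtasks. `TopologicalSorter` also offers `prepare()`, `get_ready()`, and `done()` for incremental execution, which fits that style (and allows independent steps such as `compare_prices` and `check_compliance` to run in parallel).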

Multi-Agent Collaboration

Multi-agent systems distribute work across specialized agents, each with focused expertise, a specific tool set, and a clearly defined role. A coordinator manages routing and synthesis; specialists handle what they are optimized for.

The benefits are real (better output quality, agents that can be improved independently, and a more scalable architecture), but so is the coordination complexity. Getting this right requires answering a few key questions early.

Ownership, meaning which agent has write authority over shared state, must be defined explicitly. Routing logic must settle whether the coordinator uses an LLM or deterministic rules; most production systems use a hybrid approach. Orchestration topology, in turn, shapes how agents interact.
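Two of these decisions, hybrid routing and explicit write ownership, can be sketched together. In this minimal example, deterministic keyword rules route first and a hypothetical `llm_router` stand-in handles only what the rules miss, while `SharedState` rejects writes from any agent that does not own the key. The specialists, keywords, and state keys are all illustrative assumptions.

```python
import re
from typing import Callable


class SharedState:
    """Shared state with explicit ownership: each key has exactly one writer."""

    def __init__(self, owners: dict[str, str]):
        self.owners = owners            # key -> name of the agent allowed to write it
        self.data: dict[str, str] = {}

    def write(self, agent: str, key: str, value: str) -> None:
        if self.owners.get(key) != agent:
            raise PermissionError(f"{agent} may not write {key!r}")
        self.data[key] = value


# Specialists (stubs): each has a narrow role and writes only the key it owns.
def billing_agent(msg: str, state: SharedState) -> str:
    state.write("billing", "billing_notes", msg)
    return "billing: handled"


def tech_agent(msg: str, state: SharedState) -> str:
    state.write("tech", "tech_notes", msg)
    return "tech: handled"


SPECIALISTS: dict[str, Callable[[str, SharedState], str]] = {
    "billing": billing_agent, "tech": tech_agent,
}

# Deterministic rules run first: cheap, predictable, and easy to test.
RULES = [
    (re.compile(r"\b(invoice|refund|charge)\b", re.IGNORECASE), "billing"),
    (re.compile(r"\b(error|crash|bug)\b", re.IGNORECASE), "tech"),
]


def llm_router(msg: str) -> str:
    """Hypothetical stand-in for an LLM classification call on ambiguous requests."""
    return "tech"


def route(msg: str) -> str:
    for pattern, agent in RULES:
        if pattern.search(msg):
            return agent
    return llm_router(msg)              # fall back to the model only when no rule fires


def coordinator(msg: str, state: SharedState) -> str:
    return SPECIALISTS[route(msg)](msg, state)


state = SharedState({"billing_notes": "billing", "tech_notes": "tech"})
print(coordinator("Where is my refund?", state))
```

Enforcing ownership in the state object itself, rather than trusting each agent's prompt, turns a conflicting write into an immediate error instead of silently corrupted shared data.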

The Roadmap to Mastering Agentic AI Design Patterns

Examples / Learning

- I built an agent that tells you exactly how to sell to anyone - just from their name. Here's what it does: → Takes a name as input (provide company too if common name) → Finds their entire digital… | Ethan Kinnan

- GitHub - NirDiamant/agents-towards-production: This repository delivers end-to-end, code-first tutorials covering every layer of production-grade GenAI agents, guiding you from spark to scale with proven patterns and reusable blueprints for real-world launches. ⭐ 19k

References

- Agents | Kaggle

- Agentic Design Patterns - Antonio Gulli - Google Docs

- Building effective agents | Anthropic

- Google's Blueprint to Building Powerful Agents - YouTube

- Get started | Genkit | Firebase

- oscar - Git at Google

- LLM Agents - Explained! - YouTube

- Agents 101: How to build your first AI Agent in 30 minutes!⚡️ - DEV Community

- Generative AI Fine Tuning LLM Models Crash Course - YouTube

- Real Terms for AI - YouTube

- Multi-Agent Portfolio Collaboration with OpenAI Agents SDK

- [2505.10468] AI Agents vs. Agentic AI: A Conceptual Taxonomy, Applications and Challenges

- How we built our multi-agent research system | Anthropic

- Enabling customers to deliver production-ready AI agents at scale | Artificial Intelligence

- Mixture-of-Agents (MoA): A Breakthrough in LLM Performance - MarkTechPost

- Design Systems And AI: Why MCP Servers Are The Unlock | Figma Blog

- Agentic Systems 101: Fundamentals, Building Blocks, and How to Build Them (Part A)